This post is part of an ongoing blog series where Scaled Agile Partners share stories from the field about using Measure and Grow assessments with customers to evaluate progress and identify improvement opportunities.

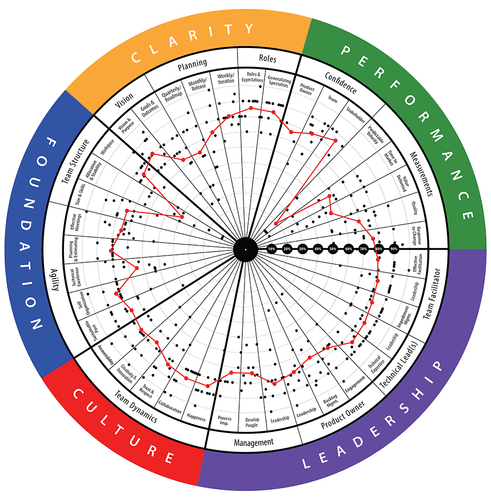

At AgilityHealth®, our team has always believed there’s a correlation between qualitative metrics (defined by maturity) and quantitative metrics (defined by performance or flow). A few years ago, we moved to gather both qualitative and quantitative data. Once we felt we had a sufficient amount to explore, we partnered with the University of Nebraska’s Center for Applied Psychological Services to review the data through our AgilityHealth platform. The main question we wanted to answer was: What are the top competencies driving teams to higher performance?

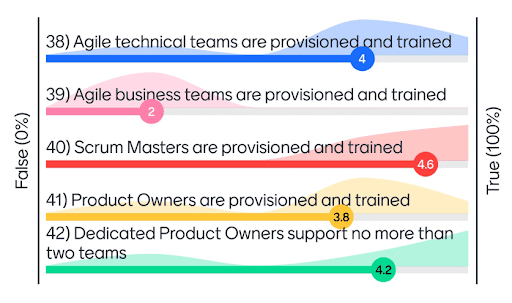

Before we jump into the data, let’s start by reviewing what metrics make up “performance.” Below are the five quantitative metrics that form the Performance Dimension within the TeamHealth® radar:

- Time-to-market

- Quality

- Predictable Delivery

- Responsiveness (cycle time)

- Value Delivered

During the team assessment, we ask the team and the product owner about their happiness and their confidence in their ability to meet the current goals. We consider these leading indicators for performance, so we were curious to see what drives the qualitative metrics of Confidence and Happiness as well.

Methodology

We analyzed both quantitative and qualitative data from teams surveyed between November 2018 and April 2021. The data set covered 146 companies and a total of 4,616 teams (some of which took the assessment more than once), which equates to more than 46,000 individual survey responses.

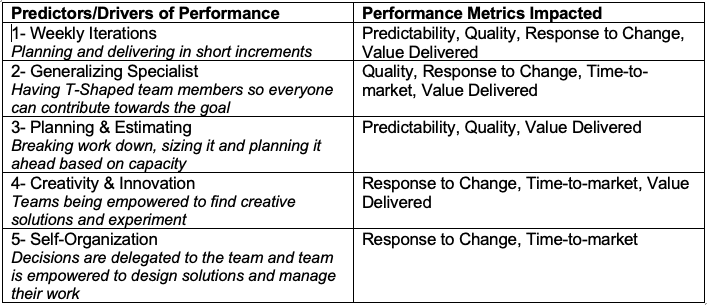

We used stepwise regression to explore and identify the top five drivers for each outcome. Stepwise regression is one approach to building a model: it adds or removes candidate predictors one at a time to arrive at the set of competencies that best predicts the desired outcome.
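To make the idea concrete, here is a minimal sketch of forward stepwise selection in Python. It is an illustration only, not the analysis the university team ran: the data is synthetic, the competency names are placeholders, and the stopping rule (a minimum gain in R²) is a simplifying assumption. Statistical packages often use p-values or information criteria instead.

```python
import numpy as np

def r_squared(X, y):
    """R^2 of an ordinary least-squares fit of y on X, with an intercept."""
    A = np.column_stack([np.ones(len(y)), X])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    resid = y - A @ coef
    ss_res = float(resid @ resid)
    ss_tot = float(((y - y.mean()) ** 2).sum())
    return 1.0 - ss_res / ss_tot

def forward_stepwise(X, y, names, max_drivers=5, min_gain=0.01):
    """Greedily add the predictor that raises R^2 the most.

    Stops at max_drivers, or when the best remaining predictor
    improves R^2 by less than min_gain (an assumed threshold).
    """
    selected = []
    best_r2 = 0.0
    while len(selected) < max_drivers:
        candidates = [j for j in range(X.shape[1]) if j not in selected]
        if not candidates:
            break
        scores = {j: r_squared(X[:, selected + [j]], y) for j in candidates}
        j_best = max(scores, key=scores.get)
        if scores[j_best] - best_r2 < min_gain:
            break
        selected.append(j_best)
        best_r2 = scores[j_best]
    return [names[j] for j in selected], best_r2

# Synthetic example: 500 hypothetical teams, 8 placeholder competencies.
rng = np.random.default_rng(0)
n_teams = 500
names = [f"competency_{i}" for i in range(8)]
X = rng.normal(size=(n_teams, len(names)))

# Assumption for the demo: only three competencies drive the outcome.
y = (0.8 * X[:, 0] + 0.5 * X[:, 3] + 0.3 * X[:, 5]
     + rng.normal(scale=0.5, size=n_teams))

drivers, r2 = forward_stepwise(X, y, names)
print("Selected drivers, in ranked order:", drivers)
print("R^2 of the final model:", round(r2, 3))
```

With this synthetic data, the procedure should pick the three competencies that were used to generate the outcome, strongest first, and then stop because none of the remaining columns adds meaningful predictive power.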

The results of our analysis identified the top five input drivers for each of the performance metrics in the TeamHealth assessment, along with the corresponding “weight” of each driver. We also uncovered the top five drivers of Confidence and Happiness for teams and product owners. These drivers are the best predictors for the corresponding metrics. All drivers are statistically significant, and the drivers for each metric are listed in ranked order.

By focusing on increasing these top five predictors, teams should see the highest gain on their performance metrics.

Results

After analyzing the top drivers for each of the performance metrics, we noticed that a few kept showing up as repeat drivers across multiple metrics.

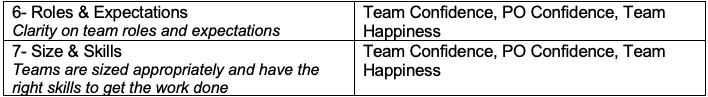

When analyzing the drivers for Confidence and Happiness, we found these additional predictors:

We know from experience that shorter iterations, better planning and estimating, and T-shaped skills all lead to better performance, but we now have data to support it. It was a welcome surprise to see self-organization and creativity take center stage in our analysis. We’ve always coached managers to empower teams to solve problems, but for the first time, we have the data to back it up.

Recommendations

Pulling these patterns together, it’s clear that a team that wants to improve its performance efficiently should focus on weekly iterations, T-shaped team members, effective planning and estimating, enabling creativity and self-organization, role clarity, and right-sizing and skilling. Teams that invested in these drivers saw a 37 percent performance improvement over teams that didn’t. So when in doubt, start here!

We’re excited to share that you can now see the drivers for each competency inside the AgilityHealth platform. We hope it helps you make informed decisions about where to invest your time and effort to improve your performance.

Visit the AgilityHealth page on the SAFe® Community Platform to learn more about these assessment tools and get started!

About Sally

Sally is a thought leader in the Agile and business agility space. She’s passionate about accelerating the enterprise business agility journey by measuring what matters at every level and building strong leaders and strong teams. She is an executive advisor to many Fortune 500 companies and a frequent keynote speaker. Learn more about AgilityHealth at https://www.agilityhealthradar.com.
