I’ve found myself in many different contexts throughout my career as a SAFe scrum master:

- Multimedia

- Instructional design

- Marketing

- Globalization

- Data analytics

Make no mistake: I am not an animation artist, an instructional designer, or a digital marketer. I’m not fluent in a second language, and I can’t write SQL (or any code, for that matter).

So how do I effectively work as a scrum master when I don’t share technical experience with my teammates? I’ll help you answer that common question by focusing on three areas:

- What does a scrum master do?

- What if I’m a scrum master without experience?

- Setting scrum master improvement areas

What does a scrum master do?

This question may sound simplistic, but there’s a reason to ask it! Reviewing the basics (in this case, the scrum master role) can reaffirm your place on the team you serve and help you explain that role clearly to others.

Your goals are simple (not easy), and they often include:

- Helping the team navigate ART practices and processes. This lets the team participate fully and ensures their interests, concerns, questions, ideas, and voices are heard. It’s especially important for new team members: everyone needs time and support to adjust to a new way of working, no matter their experience level. Scrum masters are a little bit like the glue that holds cross-functional teams and ARTs together.

- Allowing teammates to focus on execution. As experts in their domain, your team members are usually deep in the trenches of value delivery. Most other team responsibilities are shared among you, the product owner (PO), and the product manager (PM). This means scrum masters need to be experts at supporting the PO, PM, and other team members in defining the why, gathering requirements, prioritizing work, and knocking on doors to unblock progress.

- Being a champion of relentless improvement. You should help define success metrics, measure the team’s value delivery, and create a forum for the group to view and discuss the results. Teams might think they’ve defined value delivery well, but scrum masters are uniquely positioned to bring in essential perspectives from the ART, customers, business owners, and other teams. Beyond objective metrics, you can also discuss qualitative experiences like team dynamics. In partnership with the product owner, you can create a system for incremental improvement. Increasing and sustaining employee participation in this work delivers significant organizational value.

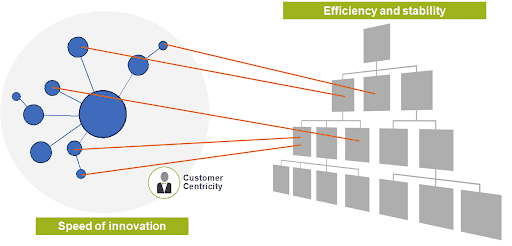

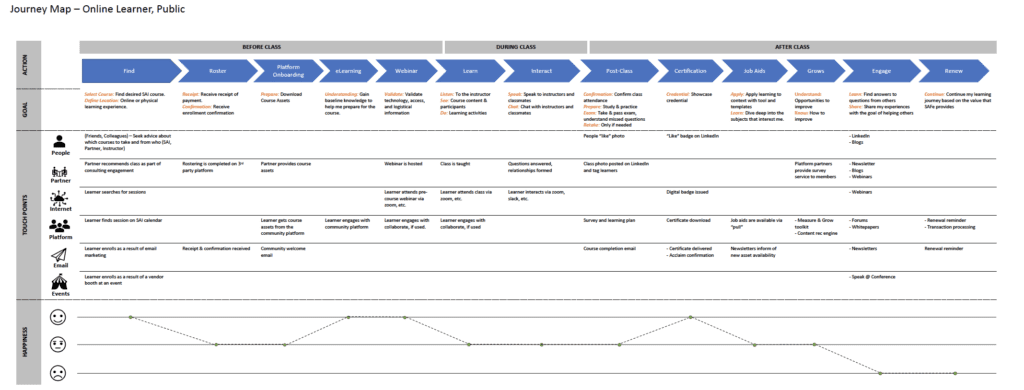

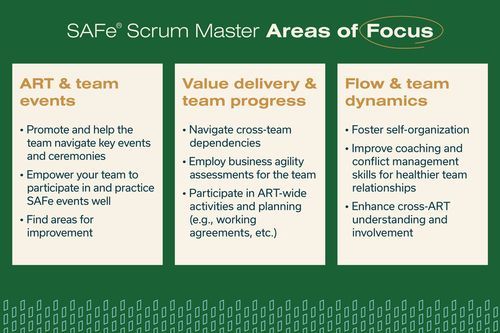

The full SAFe® scrum master article has more extensive guidance to help you define role expectations and responsibilities. As a quick reference, the image below will help you visualize three core areas where any scrum master can immediately start to add value.

Does this work require you to know what the team is making and how? Yes, to an extent. But it often doesn’t require the depth of specialized knowledge needed to build end solutions. In fact, another voice with the same experience and biases might only reinforce a myopic perspective and set of goals.

What if I’m a scrum master without experience?

Starting as a scrum master without experience can be a little overwhelming.

When it feels like too much, there are some foundational concepts you can use to stay grounded and help your team succeed.

Below are three key reminders for scrum masters who are new to their role or who serve an experienced team in an unfamiliar domain.

1 | The team is expert in its way; you are expert in yours

To coach a team effectively, you need to understand and maintain focus on:

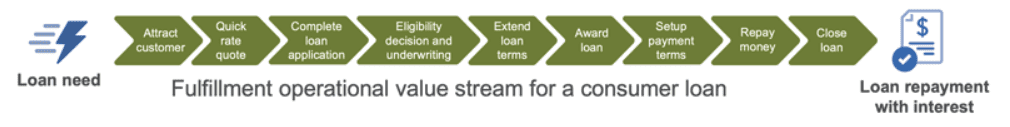

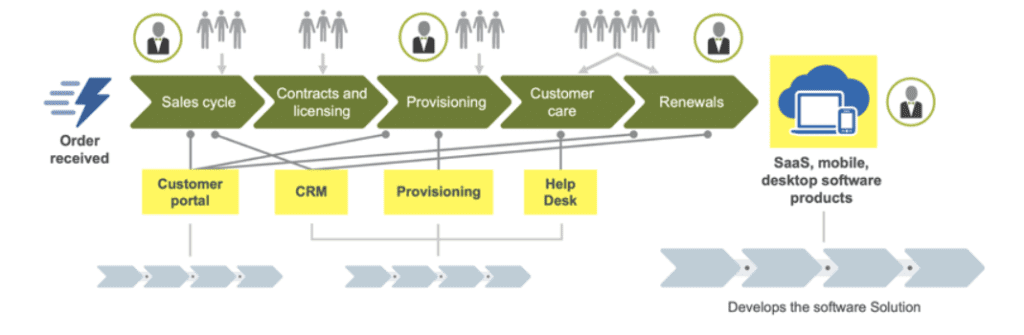

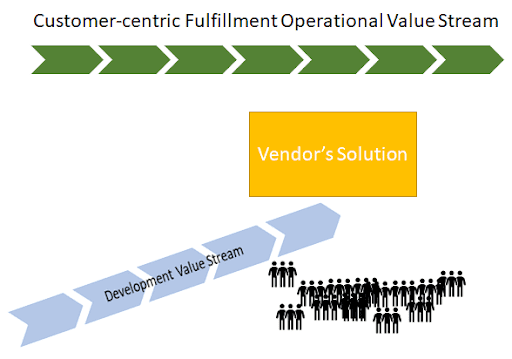

- The team’s value flow

- Typical bottlenecks

- Impediments to high quality

The rest is simply nice to have. Understanding flow, bottlenecks, and quality will allow you to quickly grasp what holds the team back and how they achieve success. This will also help you relate to your team’s emotional dynamics, including what makes them personally frustrated or fulfilled. Empathy will break through differences in experience levels and foster lasting relationships.

If you’re still skeptical, think of it this way: the product owner is the team’s backlog and content authority, yet they still refine the backlog with the team. Why? Because team members are the experts! That’s their thing. That’s why they were hired.

A scrum master isn’t an expert in the same areas. That’s not their job. Their job is to coach the team on the Plan-Do-Check-Adjust (PDCA) cycle, customer centricity, and flow; to visualize dependencies; to identify and remove bottlenecks; to manage conflict; and to listen.

2 | Build initial trust with authenticity

The not-so-secret ingredient in serving any team is trust. If you share technical expertise with your teammates, building initial trust may be easier. Teammates will know that you understand their impediments and have insight into root causes because you may have experienced them before. Your coaching may be well received because “you know what you’re talking about,” and teammates can immediately talk shop with you.

There may be some initial distrust if you don’t share technical knowledge with your teammates and they don’t understand how you contribute. If this situation sounds familiar, start by being open about your background and your willingness to learn. Emphasize that you’re not a technical expert, but that you fill many other roles that help the team work better, including:

- Servant leader

- Live-in consultant

- Advisor

- Team protector

Your expertise starts with process, method, and people.

Trust is particularly key when your work environment prioritizes honesty, candid feedback, and personal responsibility. Technical competency is a must for most roles, but emotional intelligence and interpersonal skills are vital for helping teams and individuals thrive. Organizations using SAFe should create ample space for digging into messy issues, feedback, processes, and team performance. Scrum masters can build trust in these complex emotional environments in several critical ways:

- Help everyone approach discussions in good faith

- Create a safe environment for all feedback

- Find and equip team members with the right tools and methods to provide feedback

- Encourage participation by all, not only the loudest or most persistent voices

- Communicate feedback clearly to others, demonstrating advocacy for the team

3 | Promote self-organizing teams

A scrum master’s best tools are powerful questions and intentional listening. If you share deep technical expertise with your teammates, you may have a bias when determining problems and solutions.

You might make more assumptions and offer more suggestions because you’re so familiar with the team’s work. Scrum masters without technical experience have the benefit of an outsider’s perspective and have no choice but to truly listen, clarify, and guide the team to their own solutions.

Setting scrum master improvement areas

It’s helpful to build trust and develop personal relationships. But you’ll need concrete growth goals to gain competency and confidence.

The list of scrum master improvement areas below will give you a big head start in owning your role:

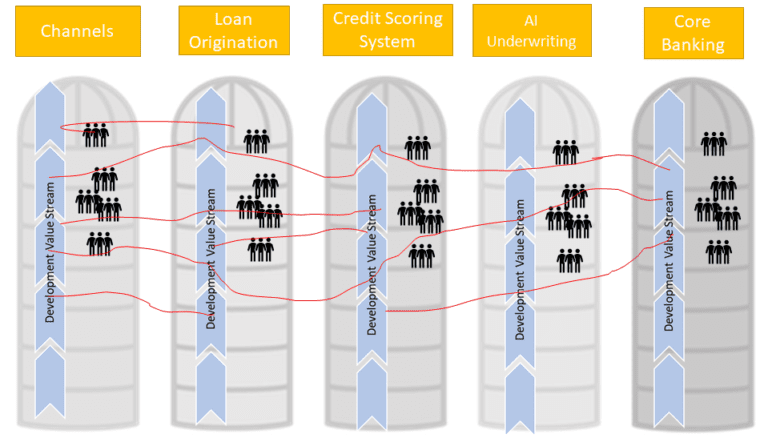

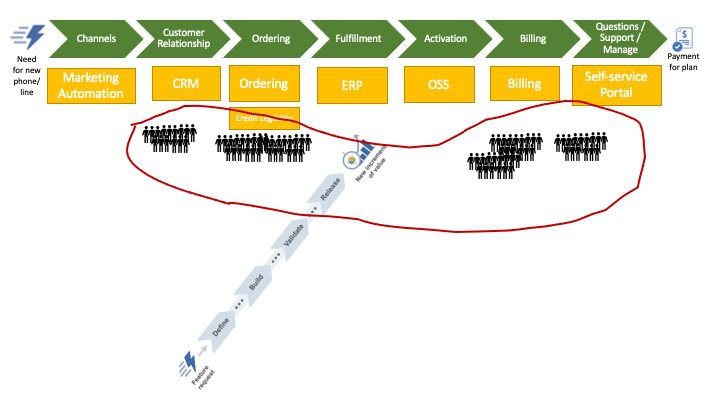

Identify the team’s value stream(s). The team might already have a value stream visualization. Maybe the product owner knows it well. Or maybe there’s a great opportunity for the team to work on identifying it together. This will help both you and your team understand how work flows and which tools and processes matter most. You’ll likely find areas for immediate improvement!

Ask obvious questions. Though it might feel like you’re slowing the team down, asking foundational questions is actually beneficial for everyone. Here are just a few obvious benefits:

- You need to learn more about team content

- The teammate receiving the question needs to think about the purpose and processes behind their work

- Other team members who aren’t involved in that work may have the same question

Schedule one-on-one meetings. Learn team members’ professional goals and interests. Ask about their pain points, what keeps them up at night, dynamics within the team, dynamics with other teams, etc. Build empathy to help smooth over inevitable future difficulties. Also, if your teammate is comfortable with it, you can ask to shadow their work while they narrate and complete day-to-day tasks.

Always have a Google tab open. Technical concepts can be difficult to grasp, and you can’t expect to know everything your team does. Instead of scheduling an hour-long lecture with a teammate every time curiosity strikes, try checking internal directories, knowledge wikis, and even Google to find a quick answer. Continuous learning is imperative.

Use an assessment to measure your progress. The AgilityHealth Scrum Master Radar Assessment (or a similar tool) can help you understand your current performance and identify areas for improvement.

Learn more about the team’s work. This shouldn’t necessarily be your first priority, but it’s definitely worth your time. Common examples include joining a lunch book club, attending a conference, creating content that requires you to learn new material, and reading technical articles. You’ll deepen your knowledge and show that you truly care about the team’s work.

Hone your craft. Consistently prioritizing professional development will demonstrate your growing expertise to the team. Whether you’re trying new approaches to retrospectives or diligently protecting and coaching team members, your efforts will earn trust.

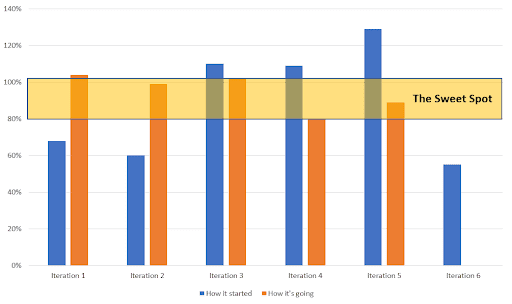

If you’re still unsure about exactly where to spend your time, the graphic below breaks down how real scrum masters (in our company) spend a typical week. You can use this tool as a gut check for balancing priorities, assessing your time management skills, and planning for a productive iteration.

More Resources for You, Scrum Masters!

Even with prior scrum master work experience, serving a team with technical expertise that you don’t have can feel daunting. But a skilled scrum master can quickly bring significant value. By clarifying how you serve the team, building trust, and continuously learning, you and your teammates can work together to build a self-organizing, high-performing team.

Here are some additional resources to help you learn more about how scrum masters of all experience levels can continue improving and serving well:

- Read about the scrum master role in improving team dynamics and productivity

- Learn how to assess your team’s agility from a scrum master Q&A

- See how scrum masters help handle conflict resolution

- Join the Scrum Master forum in the Scaled Agile Community Platform (available to current community members)

- Attend a SAFe® Advanced Scrum Master course

About Emma Ropski

Emma is a Certified SPC and scrum master at Scaled Agile, Inc. As a lifelong learner and teacher, she loves to illustrate, clarify, and simplify to keep all teammates and SAFe learners engaged. Connect with Emma on LinkedIn.